Based on previous exercises, we can wind up the whole case analysis in two basic partsĪ) If we get 1st, 2nd, 3rd.,n'th tail as the first tail in the experiment, then we have to start all over again.įor the 1st flip as tail, the part of the equation is (1/2)(x+1)įor the 2nd flip as tail, the part of the equation is (1/4)(x+2)įor the k'th flip as tail, the part of the equation is (1/(2 k))(x+k)įor the N'th flip as tail, the part of the equation is (1/(2 N))(x+N)

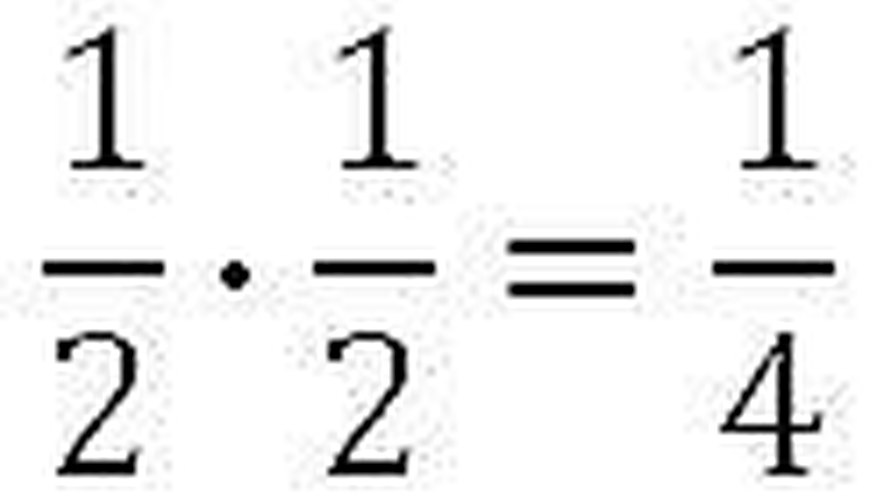

Let the exepected number of coin flips be x. (Generalization) What is the expected number of coin flips for getting N consecutive heads, given N? Thus, the expected number of coin flips for getting two consecutive heads is 6. The probability of this event is 1/4 and the total number of flips required is 2. If the first flip is a heads and second flip is also heads, then we are done. The probability of this event is 1/4 and the total number of flips required is x+2Ĭ. If the first flip is a heads and second flip is a tails, then we have wasted two flips. The probability of this event is 1/2 and the total number of flips required is x+1ī. If the first flip is a tails, then we have wasted one flip. Let the expected number of coin flips be x. What is the expected number of coin flips for getting two consecutive heads? Thus the expected number of coin flips for getting a head is 2. Using the rule of linerairty of the expectation and the definition of Expected value, we get The expected value x is the sum of the expected values of these two cases. But we have already wasted one flip, so the total number of flips is x+1. Since consecutive flips are independent events, the solution in this case can be recursively framed in terms of x - The probability of this event is 1/2 and the expected number of coins flips now onwards is x. If the first flip is the tails, then we have wasted one flip. The probability of this event is 1/2 and the number of coin flips for this event is 1.ī. If the first flip is the head, then we are done. What is the expected number of coin flips for getting a head? Random Experiments with two or more Characteristics The new concepts which will be introduced to you in this tutorial Properties of CDF, Product Moments, Central moments, Non Central momentsġ. 
Uniform distributions, Exponential distributions, Gamma distributions, Normal distributions, Lognormal distributions Introductory Probability- Compound and Independent Events, Mutually Exclusive Events, Multi-Stage Experiments Application of Probability and a quick example of a Probability Distribution, Random Variables and Sample Spaceīasic Probability Definitions - Experiment, Random Experiment, Sample Space, Baye's Theorem, Independent Events Dealing with Random Variables Chebyshev’s Inequalityĭiscrete and continuous distributions:Bernoulli trials, Binomial distributions, Geometric distributions, Negative binomial, Hypergeometric distributions, Poisson distribution, The rule of "linearity of of the expectation" says that E = E + E. For example, in a dice-throw experiment, the expected value, viz 3.5 is not one of the possible outcomes at all. It is important to understand that "expected value" is not same as "most probable value" - rather, it need not even be one of the probable values. For a continuous variable X with probability density function P(x), the expected value E is given by ∫ xP(x)dx. For example, for a dice-throw experiment, the set of discrete outcomes is and each of this outcome has the same probability 1/6. Mathematically, for a discrete variable X with probability function P(X), the expected value E is given by Σ x iP(x i) the summation runs over all the distinct values x i that the variable can take. Mathematical Expectation is an important concept in Probability Theory. This tutorial attempts to throw some light on this topic by discussing few related mathematical and programming problems. "Mathematical Expectation" is one of those few topics that is rarely discussed in details in any curriculum, but is nevertheless very important.
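The case analysis for N consecutive heads can be checked numerically. The sketch below is only an illustration, not part of the original tutorial: the function names `expected_flips` and `simulate` are hypothetical. The first function solves the linear equation x = Σ (1/2^k)(x+k) + (1/2^N)(N) exactly using fractions, and the second estimates the same quantity by simulating fair coin flips.

```python
from fractions import Fraction
import random


def expected_flips(n: int) -> Fraction:
    """Solve x = sum_{k=1..n} (1/2^k)(x + k) + (1/2^n)(n) for x.

    Hypothetical helper: the equation is linear in x, so we collect the
    coefficient of x and the constant term and divide.
    """
    # Coefficient of x on the right-hand side: sum of P(first tail at flip k).
    coeff = sum(Fraction(1, 2**k) for k in range(1, n + 1))
    # Constant term: wasted flips in each restart case, plus the success case.
    const = sum(Fraction(k, 2**k) for k in range(1, n + 1)) + Fraction(n, 2**n)
    return const / (1 - coeff)


def simulate(n: int, trials: int = 20000, seed: int = 0) -> float:
    """Estimate the expected number of flips by direct simulation."""
    rng = random.Random(seed)  # fixed seed so the estimate is reproducible
    total = 0
    for _ in range(trials):
        run = 0    # current streak of consecutive heads
        flips = 0  # flips used in this trial
        while run < n:
            flips += 1
            # Heads extends the streak; tails resets it to zero.
            run = run + 1 if rng.random() < 0.5 else 0
        total += flips
    return total / trials


if __name__ == "__main__":
    for n in (1, 2, 3):
        print(n, expected_flips(n), round(simulate(n), 2))
```

For N = 1 and N = 2 the exact solver returns 2 and 6, matching the answers derived by hand; the simulated averages should land close to those values but will vary with the seed and the number of trials.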
